In the past two years, there has been an emphasis on Test-Driven Development in Design Studio.

There seems to be a misconception about the past, though: people sometimes speak as if testing was not part of DS previously. This is untrue. No team fired up Notepad, wrote some code, and turned it over. Instead, “testing” has come to mean “having a robust automated test suite.” That’s great, and it is amazing that so many more teams are building those suites now. But we also have to remember that an automated suite isn’t the only part of how we should test software.

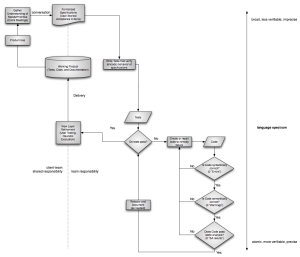

Here is my first draft of a process-style diagram of how things “should be” when we build and test software. It’s far too big to fit in here at 100% size, so click through to see it in all its glory. I’m looking for any thoughts or corrections before I make a final infographic-style rendition.

A few things to note:

- The axes. Horizontally, the client-team responsibility levels: the further an item is to the right, the less directly the client is involved. Vertically, the “language spectrum”: the further towards the bottom, the simpler it usually is to verify a typical statement in the language involved. I will have to enumerate the various levels later, but “Vision” would be at the top as the most project-specific, and something like machine code would be at the bottom as the least project-specific.

Let me know what you think!